What Is Proof of Authority?

Learn what Proof of Authority is, how PoA consensus works, why it is fast and cheap, and the tradeoffs it makes in trust, governance, and decentralization.

Introduction

Proof of Authority is a way for a blockchain to decide who gets to add the next block when the network does not want open participation from everyone. That sounds like a small design choice, but it changes the entire character of the system. In Bitcoin, the question is how strangers can compete without trusting each other. In Proof of Authority, the question is different: if the block producers are already known, how can they coordinate efficiently without turning the chain into an ordinary shared database run by a single administrator?

That difference explains both the appeal and the unease around PoA. It is appealing because open, adversarial consensus is expensive. Proof of Work burns computation. Proof of Stake needs stake management, validator economics, and broader coordination around a large participant set. If you already know which organizations are supposed to operate the chain, much of that machinery can look unnecessary. But the unease is real too, because PoA gets its efficiency by narrowing who is allowed to participate. The system becomes easier to operate partly because it is more centralized.

The core idea is simple: **a PoA network gives block production rights to a limited set of authorized validators, often called authorities or signers, whose identities are known and whose continued participation depends on reputation, governance, or both.** Instead of spending electricity or locking capital to prove they deserve a turn, they prove it by being on the approved list and by signing blocks correctly.

That is the compression point for the whole topic. A blockchain always needs a way to resist Sybil attacks, meaning attacks where one party pretends to be many parties. PoW does that with scarce computation. PoS does it with scarce economic stake. PoA does it with scarce permission. You cannot simply appear as a thousand validators, because validator status is granted by the system’s governance, not self-declared.

Once that clicks, the rest follows naturally: PoA tends to be fast because only a few validators matter, cheap because there is no mining race, useful in private or consortium settings because participants are already known, and controversial in public settings because the trust model depends heavily on who those validators are and how they can be replaced.

What problem does Proof of Authority solve?

A blockchain is not just a database with signatures. Its harder problem is coordination under disagreement. Different nodes may receive transactions in different orders, some nodes may be offline, and some participants may act maliciously. Consensus is the mechanism that makes these nodes converge on one history.

In a fully open network, the difficulty is not only agreeing on order. It is agreeing on order without first agreeing on who counts. If anyone can join pseudonymously, then a malicious actor can create many identities and try to dominate the network. That is why open blockchains need a Sybil-resistance mechanism. The system has to make influence expensive or otherwise constrained.

PoA starts from a different premise. In many real deployments, the participants are not anonymous internet users. They are known companies in a consortium, infrastructure operators of a testnet, or a foundation-approved group running a network where open validator admission is not the goal. In that setting, paying the cost of a fully permissionless consensus mechanism may not buy much. If the validator set is going to be curated anyway, a direct authority model can be more practical.

So the problem PoA solves is not “how do we secure a blockchain for a hostile, permissionless world at maximum decentralization?” It is closer to: “how do we get blockchain-style shared state and multi-party control when the block producers are known in advance?” That narrower goal is why PoA is common in private chains, local development networks, and some public networks that prioritize performance and operational control over open validator access.

How does Proof of Authority replace mining or staking with authorized signers?

At the mechanical level, PoA removes the mining or staking contest and replaces it with an approved signer set. A block is valid not because someone did expensive work or because a weighted committee attested with stake-backed votes, but because it was produced by an address that the network currently recognizes as authorized.

That does not mean “anything signed by an authority is automatically fine.” The normal block validity rules still apply. Transactions must be valid, state transitions must be correct, and the block must fit whatever timing and sequencing rules the consensus engine requires. What PoA changes is the right to propose or seal the block in the first place.

A good mental model is a meeting with a fixed roster. In an open crowd, you need a procedure for deciding who gets the microphone without letting one person impersonate a hundred attendees. In a board meeting, the problem is simpler because there is already a membership list. You still need rules about turn-taking, quorum, and replacing members, but you do not need to solve identity from scratch every time. PoA is blockchain consensus for something more like the second case than the first.

The analogy helps explain the mechanism, but it fails in an important way. A blockchain still operates over an unreliable network with independent machines and possible adversaries. So PoA is not merely a club with a roster; it still needs protocol rules to handle forks, delayed messages, missed turns, and malicious insiders.

How are blocks produced in Proof-of-Authority networks?

Most PoA systems assign block production to authorized validators in a structured way rather than letting all of them race freely. A common pattern is round-robin scheduling: validator A is expected to produce one block, then validator B, then validator C, and so on. This keeps block timing predictable and avoids needless duplication.

But real networks are messy. A validator may be offline, its clock may drift, or its block may arrive late. So many PoA designs allow some flexibility: another authorized validator may produce an out-of-turn block if the expected proposer does not. This preserves liveness, meaning the chain can keep moving even when some authorities fail.
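The turn-taking rule can be sketched in a few lines. This is a minimal illustration that assumes the Clique convention of sorting signer addresses and picking the one whose index equals the block number modulo the signer count; the function names and the short address strings are hypothetical.

```python
def in_turn_signer(signers, block_number):
    """Return the signer scheduled for this block under round-robin.

    Assumes signers are ordered by address and that the scheduled index
    is block_number modulo the number of signers.
    """
    ordered = sorted(signers)
    return ordered[block_number % len(ordered)]


def is_in_turn(signers, block_number, signer):
    """True if `signer` is the scheduled proposer for `block_number`."""
    return signer == in_turn_signer(signers, block_number)


# Hypothetical three-signer network.
signers = ["0xc", "0xa", "0xb"]
scheduled = in_turn_signer(signers, 4)       # index 4 % 3 == 1 in sorted order
fallback_ok = not is_in_turn(signers, 4, "0xc")  # "0xc" would be out of turn
```

Any other authorized signer that seals block 4 in this sketch is out of turn, which is what keeps the chain live when the scheduled signer is offline.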

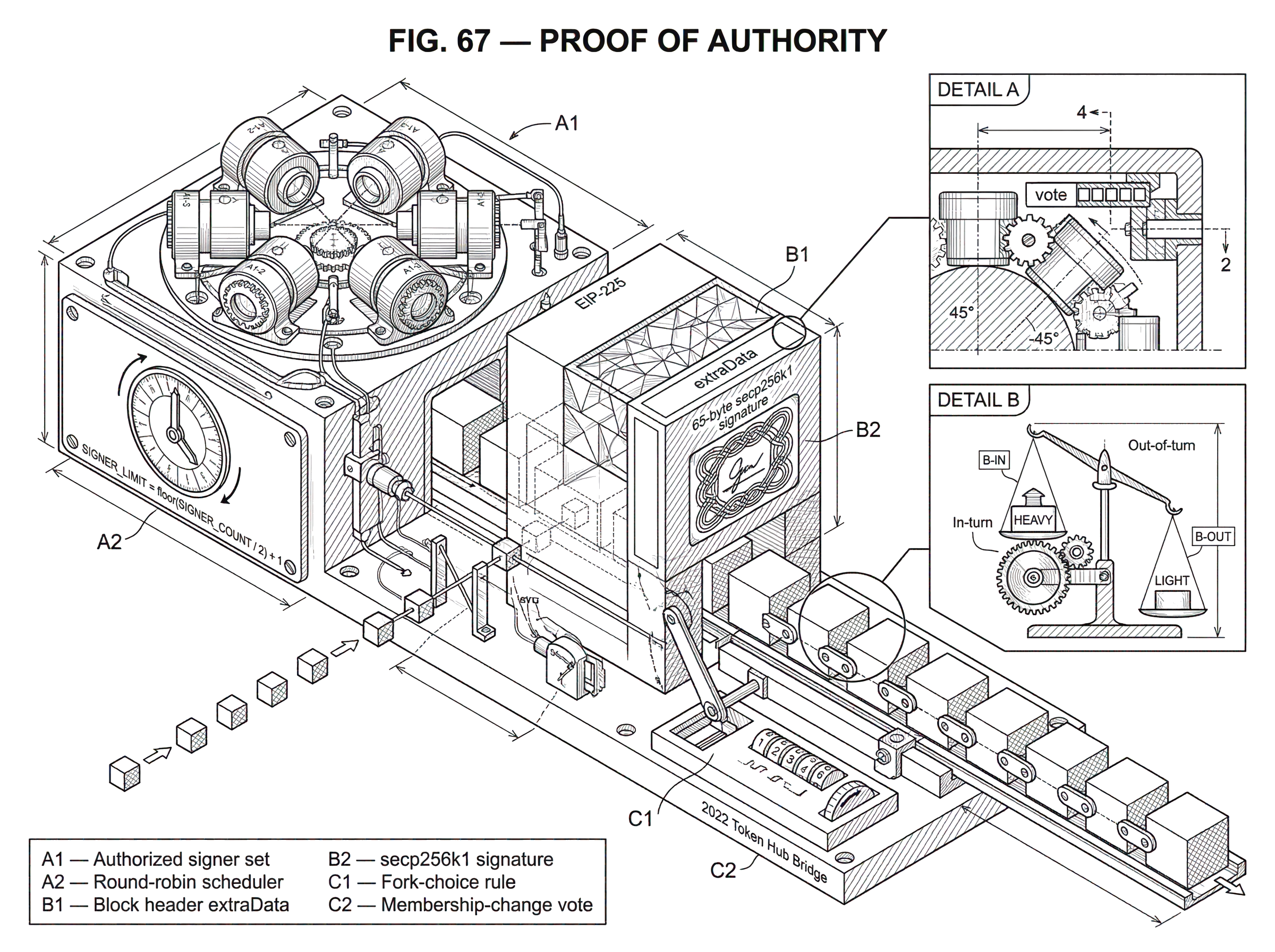

That flexibility creates a new problem. If multiple authorities can sometimes produce competing blocks, the network needs a fork-choice rule. Clique, the best-known Ethereum PoA design specified in EIP-225, handles this by favoring in-turn blocks over out-of-turn blocks. It encodes that preference through block difficulty values: an in-turn block gets a higher weight than an out-of-turn one. Nodes then prefer the heavier branch.

Here is the mechanism in ordinary language. Suppose there are five authorized signers. At a given height, signer 3 is expected to produce the next block. If signer 3 does so on time, everyone sees a strong signal: this is the intended continuation. If signer 3 is offline and signer 4 steps in, the chain can still advance, but that advance carries a weaker signal because it was not the scheduled signer’s turn. Over time, this bias helps the network converge on one branch without needing a mining race.
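To make the weighting concrete, here is a small sketch. It assumes the difficulty constants from EIP-225 (2 for an in-turn block, 1 for an out-of-turn block) and compares branches by summed difficulty; the helper names, the signer labels, and the tie-breaking choice are illustrative assumptions rather than part of any client.

```python
DIFF_INTURN = 2  # weight of a block sealed by the scheduled signer
DIFF_NOTURN = 1  # weight of a block sealed by any other authorized signer


def block_weight(signers, block_number, signer):
    """Difficulty a block carries, depending on whether it was in turn."""
    ordered = sorted(signers)
    expected = ordered[block_number % len(ordered)]
    return DIFF_INTURN if signer == expected else DIFF_NOTURN


def branch_weight(signers, branch):
    """Total difficulty of a branch given as (block_number, signer) pairs."""
    return sum(block_weight(signers, n, s) for n, s in branch)


def prefer(signers, branch_a, branch_b):
    """Pick the heavier branch; ties go to branch_a (an arbitrary assumption)."""
    if branch_weight(signers, branch_a) >= branch_weight(signers, branch_b):
        return branch_a
    return branch_b


# Five hypothetical signers; at block 7 the scheduled signer is "s3".
signers = ["s1", "s2", "s3", "s4", "s5"]
on_schedule = [(7, "s3")]   # signer 3 seals its own slot
stepped_in = [(7, "s4")]    # signer 4 fills in out of turn
winner = prefer(signers, on_schedule, stepped_in)
```

Both branches are valid in this sketch, but nodes comparing them would settle on the one sealed by the scheduled signer.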

Clique also limits how frequently the same signer may create blocks. In the specification, a signer may sign at most one block out of SIGNER_LIMIT consecutive blocks, where SIGNER_LIMIT is floor(SIGNER_COUNT / 2) + 1. The reason is straightforward: if one authority’s key is compromised, the protocol should not let that one signer flood the chain with block after block. Rate limiting does not eliminate the risk of a compromised authority, but it caps how much damage a single signer can do before others can respond.
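The limit is easy to check by hand. The sketch below computes SIGNER_LIMIT from the formula above and tests whether a signer appears in the most recent SIGNER_LIMIT - 1 blocks; the function names and the list-of-recent-signers representation are assumptions made for illustration.

```python
def signer_limit(signer_count):
    """SIGNER_LIMIT = floor(SIGNER_COUNT / 2) + 1."""
    return signer_count // 2 + 1


def may_sign(signer, recent_signers, signer_count):
    """True if `signer` has not sealed any of the last SIGNER_LIMIT - 1 blocks.

    `recent_signers` lists who sealed previous blocks, oldest first.
    """
    limit = signer_limit(signer_count)
    # Guard the limit == 1 case: slicing with [-0:] would return the whole list.
    window = recent_signers[-(limit - 1):] if limit > 1 else []
    return signer not in window


# With five signers the limit is 3, so a signer must sit out two blocks.
history = ["a", "b", "c"]
a_allowed = may_sign("a", history, 5)   # "a" last signed three blocks ago
b_allowed = may_sign("b", history, 5)   # "b" signed within the last two blocks
```

Because the limit is a strict majority of the signer set, a single compromised key can never seal more than a minority of any run of consecutive blocks.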

How do PoA networks track and change their authorized validators?

| Model | Where stored | Change method | Strength | Weakness |
|---|---|---|---|---|
| Static genesis | Genesis config | Requires manual update | Very simple, fast sync | No live updates |
| Header votes (Clique) | Block headers extra-data | Embedded header votes | Light sync friendly | Limited voting expressivity |
| Contract-managed | On-chain contract state | Transactions to update | Flexible governance | Heavier sync required |

A PoA system is only as meaningful as its authority list. The protocol therefore needs a clear answer to two questions: who is currently authorized, and how does that list change?

The simplest approach is a static list written into the genesis configuration. Many private and development networks work this way. The initial chain specification names the validator addresses, and only those addresses may sign blocks. This is operationally simple, which is one reason PoA is popular for local networks and controlled environments.

But a real network cannot assume the validator set never changes. Keys get rotated, operators go offline, organizations leave the consortium, and new ones join. So PoA designs usually include a membership-change mechanism. In Clique, this is done through votes embedded in block headers rather than through separate staking logic. An authorized signer can use fields in the block header to propose adding or removing another signer. Other signers include their own votes in later blocks, and nodes tally the current votes. Once the proposal reaches the threshold, the signer set changes.

This design choice matters more than it first appears. EIP-225 deliberately keeps the relevant consensus information in block headers so that clients can verify the authority state from headers alone. That supports fast and light synchronization more naturally than a design that would require replaying smart contract state just to know who the validators are. In Clique, the header’s extraData includes a 65-byte secp256k1 signature, which lets clients recover the signer’s identity directly from the block.
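As a rough illustration of that layout, the sketch below splits a Clique extraData field into its parts. It assumes the EIP-225 layout of 32 vanity bytes at the front, the 65-byte signature at the end, and (on checkpoint blocks only) a list of 20-byte signer addresses in between. It does not recover the signer from the signature, which would need a secp256k1 library; the function name is hypothetical.

```python
EXTRA_VANITY = 32    # leading bytes reserved for arbitrary signer data
EXTRA_SEAL = 65      # trailing bytes holding the secp256k1 signature
ADDRESS_LENGTH = 20  # size of one signer address


def split_extra_data(extra):
    """Split extraData into (vanity, checkpoint_signers, seal)."""
    if len(extra) < EXTRA_VANITY + EXTRA_SEAL:
        raise ValueError("extraData too short for vanity and seal")
    vanity = extra[:EXTRA_VANITY]
    seal = extra[-EXTRA_SEAL:]
    middle = extra[EXTRA_VANITY:-EXTRA_SEAL]
    if len(middle) % ADDRESS_LENGTH != 0:
        raise ValueError("signer list is not a multiple of 20 bytes")
    signers = [
        middle[i:i + ADDRESS_LENGTH]
        for i in range(0, len(middle), ADDRESS_LENGTH)
    ]
    return vanity, signers, seal


# Synthetic checkpoint-style payload with two placeholder addresses.
extra = b"\x00" * 32 + b"\x11" * 20 + b"\x22" * 20 + b"\xff" * 65
vanity, signers, seal = split_extra_data(extra)
```

On a non-checkpoint block the middle section is empty, so the same function returns an empty signer list.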

The same specification repurposes other header fields for governance. The beneficiary field names the account being voted on, and the nonce is set to a special value to mean “authorize” or “deauthorize.” This may look odd if you are used to those fields serving other roles, but the logic is pragmatic: the protocol wanted a PoA scheme that fit into Ethereum’s existing header model with minimal disruption.
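A small decoder shows how those two fields combine into a vote. It assumes the magic nonce values defined in EIP-225 (all 0xff bytes to authorize, all zero bytes to deauthorize). Treating a zero beneficiary as "no vote" is a simplification made for this sketch, and the function name and sample address are hypothetical.

```python
NONCE_AUTH = bytes.fromhex("ffffffffffffffff")  # vote to add a signer
NONCE_DROP = bytes.fromhex("0000000000000000")  # vote to remove a signer
ZERO_ADDRESS = "0x" + "00" * 20


def decode_vote(beneficiary, nonce):
    """Return (target_address, authorize) or None if the header casts no vote."""
    if nonce not in (NONCE_AUTH, NONCE_DROP):
        raise ValueError("nonce must be one of the two magic values")
    if beneficiary == ZERO_ADDRESS:
        # Simplifying assumption: a zero beneficiary means no proposal.
        return None
    return beneficiary, nonce == NONCE_AUTH


candidate = "0x" + "ab" * 20  # hypothetical address being voted on
add_vote = decode_vote(candidate, NONCE_AUTH)
drop_vote = decode_vote(candidate, NONCE_DROP)
no_vote = decode_vote(ZERO_ADDRESS, NONCE_DROP)
```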

There is also an operational subtlety here. Votes cannot accumulate forever without bound, or an attacker could spam governance proposals and burden clients with unending historical baggage. Clique addresses this with epochs. At epoch boundaries, pending votes are flushed, and the current signer list is checkpointed in the block header extra-data. That creates a reset point that new or syncing nodes can trust without replaying every ancient vote.
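The epoch rule reduces to a modulo check. The sketch below assumes the EIP-225 suggested default of 30,000 blocks per epoch (networks can configure a different value) and models pending votes as a plain list; both function names are illustrative.

```python
EPOCH_LENGTH = 30_000  # suggested default in EIP-225; configurable per network


def is_checkpoint(block_number, epoch_length=EPOCH_LENGTH):
    """Checkpoint blocks fall on exact multiples of the epoch length."""
    return block_number % epoch_length == 0


def votes_after_block(pending_votes, block_number, epoch_length=EPOCH_LENGTH):
    """Pending votes are discarded at a checkpoint and carried over otherwise."""
    if is_checkpoint(block_number, epoch_length):
        return []
    return list(pending_votes)


carried = votes_after_block(["vote-1", "vote-2"], 29_999)
flushed = votes_after_block(["vote-1", "vote-2"], 30_000)
```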

Example: How do validator rotations and votes work in a PoA chain?

Imagine a consortium chain run by four logistics companies. At launch, the authorized signers are addresses controlled by NorthPort, Atlas Freight, BlueRail, and Meridian Storage. Their nodes take turns producing blocks every few seconds. For months, the network works smoothly because each company keeps one validator online and the signer list rarely changes.

Then Meridian decides to rotate its infrastructure. Its old signing key is being retired, and a new key must be authorized. In a PoA system like Clique, Meridian cannot simply start signing from the new address and expect the network to accept it. The protocol has to change the recognized authority set.

So NorthPort includes a vote in its next block to authorize Meridian’s new address. Atlas Freight does the same in a later block. Once enough current signers have voted (under Clique, a majority of the current signers, which for four signers means three votes), the proposal takes effect immediately. From that point on, the new Meridian address is valid as a signer.

Now imagine BlueRail’s validator begins misbehaving, perhaps because its key was leaked or its node software is faulty. The remaining authorities start including votes to remove BlueRail’s signing address. As the threshold is reached, BlueRail is dropped from the active signer set. The important point is not just that membership changed, but **that membership changed through the same consensus machinery that secures the chain.** The governance is not outside the protocol; it is expressed through it.
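The rotation and removal in this example can be replayed with a simplified tally. The sketch assumes a Clique-style rule where a proposal passes once strictly more than half of the current signers have voted for it. It omits epochs and vote changes, and the company names stand in for signer addresses; `apply_votes` is a hypothetical helper, not a client API.

```python
def apply_votes(signers, votes):
    """Replay (voter, target, authorize) votes and return the final signer set."""
    current = set(signers)
    tally = {}  # (target, authorize) -> set of voters supporting it
    for voter, target, authorize in votes:
        if voter not in current:
            continue  # only current signers may vote
        if authorize == (target in current):
            continue  # adding a member or dropping a non-member is meaningless
        key = (target, authorize)
        tally.setdefault(key, set()).add(voter)
        if len(tally[key]) > len(current) // 2:
            if authorize:
                current.add(target)
            else:
                current.discard(target)
            # Drop tallies about the changed account and votes by ex-signers.
            tally = {
                k: {v for v in voters if v in current}
                for k, voters in tally.items()
                if k[0] != target
            }
    return current


signers = ["NorthPort", "Atlas", "BlueRail", "Meridian"]
votes = [
    ("NorthPort", "MeridianNew", True),
    ("Atlas", "MeridianNew", True),
    ("BlueRail", "MeridianNew", True),   # third vote crosses the majority of four
    ("NorthPort", "BlueRail", False),
    ("Atlas", "BlueRail", False),
    ("Meridian", "BlueRail", False),     # third vote crosses the majority of five
]
final_signers = apply_votes(signers, votes)
```

With four signers, two votes are not enough: the new Meridian address joins only after a third signer votes, and removing BlueRail from the resulting five-signer set again takes three votes.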

Some PoA systems use more elaborate validator-set management. OpenEthereum’s authority-based engines, including Aura, support static validator lists, contract-managed validator sets, and block-indexed transitions. That means the authority list can be fixed in genesis, updated via contract logic, or changed at defined block numbers. The common thread is that PoA needs a crisp, machine-verifiable answer to who is allowed to sign now.
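A block-indexed transition table can be resolved with a simple lookup. The sketch below is modeled loosely on the shape of OpenEthereum's multi-set validator configuration, where each starting block maps to either a fixed list or a contract address. The key names, addresses, and helper function are illustrative assumptions, and the sketch ignores the finality-dependent details of when a switch actually takes effect.

```python
from bisect import bisect_right


def active_validator_source(transitions, block_number):
    """Return the validator-set definition in force at `block_number`.

    `transitions` maps a starting block number to a definition.
    """
    starts = sorted(transitions)
    index = bisect_right(starts, block_number) - 1
    if index < 0:
        raise ValueError("no validator set defined for this block")
    return transitions[starts[index]]


# Hypothetical schedule: a static list from genesis, a contract from block 100.
transitions = {
    0: {"list": ["0xaaa", "0xbbb", "0xccc"]},
    100: {"safeContract": "0xddd"},
}
before = active_validator_source(transitions, 99)
after = active_validator_source(transitions, 100)
```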

Why is Proof of Authority faster and cheaper than PoW or PoS?

PoA’s performance advantages are not magical. They follow from the fact that the protocol is coordinating among fewer, known participants.

When only a small validator set is involved, the network can use shorter block times and simpler scheduling. There is no global mining competition, so the chain does not need to absorb wasted work from many unsuccessful block producers. There is also less need to economically compensate massive resource expenditure, which is why PoA networks are cheap to run compared with Proof of Work systems.

This is especially useful in environments where the blockchain is a coordination layer inside a business or developer workflow. A local development chain does not need permissionless mining. A consortium ledger for a supply chain does not necessarily want anonymous validators joining from anywhere on the internet. A testnet often wants predictability and low operational burden more than maximal decentralization. In all of these settings, PoA offers a cleaner fit.

That is why Ethereum ecosystem tooling long used PoA heavily for testnets and private deployments, and why engines such as Clique, Aura, and IBFT-style authority systems became common in enterprise and development contexts. It is also why authority-based ideas appear in public networks in modified forms. BNB Smart Chain, for example, uses Proof of Staked Authority, or PoSA, where a relatively small validator set is combined with stake-based selection. The point of that hybrid is to keep the operational advantages of a small authority set while adding a stronger economic admission and penalty structure.

Where does trust live in Proof-of-Authority systems?

The most common misunderstanding about PoA is to think that it removes trust. It does not. It moves trust.

In PoW, you trust that no attacker can cheaply command more computation than the rest of the network for long enough to rewrite history. In PoS, you trust that the validator and slashing design aligns economic incentives strongly enough, and that stake distribution is not too concentrated. In PoA, you trust that the authorized validators are sufficiently independent, accountable, and governable that they will not collude or fail together.

That means PoA security is partly technical and partly institutional. The technical side includes signature verification, turn-taking rules, fork choice, vote thresholds, checkpointing, and rate limits. The institutional side includes who selects the authorities, how identities are vetted, how key compromise is handled, what happens if a validator censors transactions, and whether there is credible recourse if authorities abuse their power.

This is why PoA is often described as reputation-based. The chain is not saying “this signer has burned resources” or “this signer has locked stake.” It is saying, more or less, “this signer is on the approved list, and the list itself is supposed to reflect some governance judgment about trustworthiness.”

That can be perfectly reasonable in a consortium where validators are known companies with contractual relationships. It is more contentious in a public network where users may expect neutral access and resistance to coordinated intervention.

What are the main risks and failure modes of Proof-of-Authority?

| Failure mode | Typical cause | Impact | Mitigation |
|---|---|---|---|
| Censorship | Majority collusion | Transaction censorship or rewrite | Broader, independent authorities |
| Key compromise | Stolen or leaked key | Unauthorized block signing | Rate limits, rapid removal |
| Cloning attack | Duplicate key instances | Conflicting histories, double-spend | Quorum tuning, clone detection |
| Emergency governance | Coordinated validator action | Fast fix but centralization risk | Clear rules and transparency |

Because PoA relies on a small authority set, failures can be sharper and more human-shaped than in large open validator systems. If enough authorities collude, they can censor transactions, reorder activity, or approve membership changes that entrench their own control. Ethereum’s developer documentation notes that censorship generally requires control of more than half of the signers in typical PoA setups, but that threshold should not be mistaken for comfort. On a small validator set, “more than half” may mean only a handful of organizations.

Compromised keys are another obvious risk. If an attacker steals a signer’s key, they inherit that signer’s authority until the network removes it. Protocol limits on how often a single signer may seal blocks help reduce the blast radius, but they do not solve the underlying problem. Operational security around key management becomes central.

Network partitions create subtler risks. Research on Aura and Clique has shown that some PoA designs can be vulnerable to attacks that exploit duplicated keys and partitioned communication. In the so-called cloning attack, a malicious sealer runs multiple instances using the same signing key in different network partitions. Under the wrong conditions, both partitions can appear sufficiently valid for conflicting histories to be accepted, enabling double-spend-style outcomes. The broader lesson is not that every PoA network is always broken, but that **small-validator, schedule-based protocols have delicate safety and liveness assumptions.** If those assumptions are underspecified or operationally violated, surprising failures can emerge.

Even when the base consensus works as intended, PoA-family networks make explicit the possibility of coordinated intervention. BNB Chain’s response to the 2022 Token Hub bridge exploit involved validator coordination to pause and upgrade the network. That incident was about a bridge verification bug, not a direct failure of base-chain consensus, but it still revealed a property relevant to authority-style systems: when the validator set is relatively small and coordinated, exceptional action is more feasible. Some users see that as a strength because it can limit damage quickly. Others see it as proof that the system is less credibly neutral.

Neither interpretation is purely rhetorical. They point to a real design tradeoff. The same structure that makes PoA operationally efficient also makes governance power more concentrated and more usable.

What PoA variants exist and how do they differ (Clique, Aura, IBFT, PoSA)?

| Variant | Scheduling | Finality | Sync friendliness | Best for |
|---|---|---|---|---|
| Clique (EIP-225) | Round-robin, in-turn bias | Weighted eventual finality | Header-only sync friendly | Ethereum testnets, private nets |
| Aura | Time-slot scheduling | Non-instant BFT finality | Moderate sync needs | Enterprise private chains |
| IBFT 2.0 | BFT vote rounds | Fast BFT finality | State-dependent sync | Permissioned chains needing finality |
| PoSA (BNB) | Stake-weighted authorities | Finality with FFG-like gadget | Moderate sync needs | High-throughput public chains |

“Proof of Authority” names a family resemblance more than a single universal protocol. The common principle is that a restricted set of known validators controls block production. But the details differ in ways that matter.

Clique, standardized in EIP-225, was designed to fit Ethereum clients with minimal disruption. It uses header-contained signatures, round-based scheduling, in-turn and out-of-turn block weighting, and header-embedded votes for signer changes. Its design strongly reflects a practical constraint: support existing client sync modes without introducing special dependence on smart contract state for validator tracking.

Aura, used in the OpenEthereum ecosystem, also relies on authority-based block production but exposes different operational parameters such as stepDuration, the minimum interval between blocks. That parameter sounds innocent, but it encodes a real systems tradeoff. If stepDuration is too low, normal clock drift or heavy blocks can cause reorgs and consensus instability. If it is too high, the network becomes sluggish. OpenEthereum documentation also supports validator sets defined statically or by contracts, making governance more customizable.
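The role of stepDuration is easier to see in a sketch of Aura's time-slot scheduling. It assumes the commonly documented rule that the step number is the UNIX time divided by the step duration and that the primary for a step is the validator at index step modulo the validator count. The function names and validator labels are hypothetical.

```python
def aura_step(unix_time, step_duration):
    """Step number for a timestamp, with step_duration in seconds."""
    return unix_time // step_duration


def aura_primary(validators, unix_time, step_duration):
    """Validator expected to seal the block for the step containing unix_time."""
    step = aura_step(unix_time, step_duration)
    return validators[step % len(validators)]


validators = ["a", "b", "c"]
same_slot = (
    aura_primary(validators, 1_000_000, 5),
    aura_primary(validators, 1_000_004, 5),  # still inside the same 5-second step
)
next_slot = aura_primary(validators, 1_000_005, 5)
```

Because the schedule is derived from wall-clock time, two nodes whose clocks disagree by more than a fraction of the step can disagree about whose turn it is, which is why a very small stepDuration invites reorgs.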

Some authority-based protocols push further toward Byzantine fault tolerant designs with stronger finality properties. IBFT 2.0, for example, belongs to that direction. And some public networks mix authority ideas with staking, as BNB Smart Chain’s PoSA does. So when someone says a chain uses PoA, the right next question is not “got it” but “which authority protocol, with what validator-set rules and what finality assumptions?”

When is Proof of Authority the right choice?

PoA makes the most sense when the blockchain’s value comes from shared execution and auditable coordination more than from open validator admission. If the participants already know they want a bounded validator set, PoA can remove a lot of unnecessary machinery.

That is why it is a natural fit for private enterprise networks, consortium chains, staging environments, and developer testnets. In those settings, the main goal is often to have multiple parties share a ledger without placing one operator fully in charge. PoA gives that multi-party structure while staying operationally light.

It can also make sense in some public systems when the designers consciously accept a narrower decentralization target in exchange for throughput, lower fees, and operational responsiveness. But in public settings the burden of explanation is higher. Users need to understand that the system’s security depends on a governance process around validator identity, not just abstract protocol math.

Conclusion

Proof of Authority is consensus by approved identity. A blockchain using PoA does not ask, “who spent the most computation?” or “who has the most stake at risk?” It asks, “which known validators are currently authorized to sign, and what rules govern their turns and their replacement?”

That shift makes PoA fast, cheap, and practical for networks where validator membership is intentionally restricted. It also means the system’s real security depends not only on code, but on governance, operator independence, key management, and the assumptions behind the authority set. If you remember one thing tomorrow, it should be this: **PoA works well when permission is the chosen source of Sybil resistance, and breaks down when people expect permissionless trust minimization from a permissioned design.**

What should you understand before using this part of crypto infrastructure?

Before using a Proof-of-Authority chain, confirm who the authorities are, how validator changes happen, and what finality model the project guarantees. On Cube Exchange, use that information to fund, trade, or transfer with protocols and timing that match PoA risks and governance behaviour.

- Open the chain’s docs or block explorer and record the consensus engine (e.g., Clique, Aura, IBFT), the current signer count, epoch length, and whether validator changes are header-embedded or contract-managed.

- Inspect recent blocks or governance records for signer add/remove votes and the last epoch checkpoint to see how fast membership changes have taken effect historically.

- Check security notes for PoA-specific risks: whether SIGNER_LIMIT or rate limits are used, any published cloning/partition warnings, and guidance on required confirmations or checkpoint waits.

- Deposit funds into your Cube account (fiat on‑ramp or token transfer) sized to match the chain risk you observed.

- Execute your trade or on‑chain transfer on Cube, choose order/transfer timing to allow for the project’s recommended checkpoints or confirmations, and monitor the project’s governance updates after the move.
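For the first checks in the list above, a Clique node that exposes Geth's `clique` JSON-RPC namespace can report its signer set directly. The sketch below only builds the request bodies and does not send them. Whether a given node exposes these methods is an assumption to verify per deployment, and the helper name is hypothetical.

```python
import json


def rpc_request(method, params=None, request_id=1):
    """Build a JSON-RPC 2.0 request body as a string."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": method,
        "params": params or [],
    })


# Request bodies for the current signer list and the full voting snapshot.
signers_request = rpc_request("clique_getSigners", ["latest"])
snapshot_request = rpc_request("clique_getSnapshot", ["latest"], request_id=2)
```

Posting these bodies to a node's HTTP RPC endpoint would return the authorized addresses and the pending vote tally, which covers the signer count and recent membership changes mentioned in the checklist.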

Frequently Asked Questions

In PoA, a node’s right to produce a block comes from being on the network’s approved validator list and from producing a valid signature for the block. Clique in particular embeds a secp256k1 signature in block headers so clients can recover the signer identity from headers alone, while normal block validity rules (transactions and state transitions) still apply.

Authority membership changes are typically done by votes recorded in block headers (Clique uses header fields like extraData, beneficiary and a special nonce), nodes tally those votes and when a proposal reaches the required threshold the signer set is updated, and epochs checkpoint and flush pending votes to keep history bounded - however, exact numeric thresholds and some ordering edge-cases are left to implementations and are not universally specified.

PoA schemes avoid continuous mining races by scheduling proposers (often round-robin) and resolving competing or out‑of‑turn blocks with fork-choice biases - Clique favors in‑turn blocks by giving them higher weight and also rate‑limits how often one signer may consecutively seal blocks (SIGNER_LIMIT = floor(SIGNER_COUNT/2) + 1) to cap damage from a single compromised key.

Unique PoA risks include concentrated governance (a small number of colluding authorities can censor or reorder transactions, typically requiring control of a majority), key compromise (an attacker with a signer key inherits its powers until removed), and cloning attacks where duplicated keys across partitions can enable conflicting valid histories; these risks are documented both in protocol notes and in academic analyses of Aura/Clique.

EIP-225 and Clique were designed to keep validator state inside headers so clients can verify who the signers are without replaying on‑chain contract state, which enables fast/light/warp synchronization and makes header-only verification practical for light clients.

PoA is most appropriate when participants expect a bounded, known validator set - examples include private enterprise consortia, developer testnets, staging networks, and some public chains that accept a narrower decentralization target in exchange for throughput and operational control.

Finality and safety properties vary by PoA variant: some engines (IBFT-style) move toward instant/strong BFT finality, others are explicitly “non-instant BFT” with longer, probabilistic finality, and some platforms (e.g., VeChain’s documentation) report long deterministic finality windows - so you must read the specific engine’s guarantees rather than assuming a single finality model for all PoA systems.

There are no universal defaults for parameters like stepDuration, epoch length, or vote windows; implementers must tune them because choices matter (for example OpenEthereum warns that too low a stepDuration can cause reorgs), and some recommended numeric settings and tradeoffs are explicitly left unspecified by the specs and remain deployment decisions.

Authority-style networks make exceptional, coordinated actions (pauses, upgrades or rollbacks) operationally feasible because the validator set is small and coordinated, which can be used to limit damage in emergencies (as seen in BNB Chain’s coordinated pause/upgrade response), but that same feasibility is what leads some users to view PoA as less neutral and more intervention-capable than permissionless designs.
